I vividly remember the moment I hit ‘play’ on a new audio project, only to be greeted by a wave of glitchy, distorted AI vocals: the kind of sound that makes you double-check whether your equipment is malfunctioning, or whether the AI is simply messing with you. It was frustrating, but it was also a lightbulb moment. I realized that fixing AI vocal phasing in 2026 demands more than basic editing skills; it takes the right tools, applied with precision and insight, to salvage recordings that are otherwise valuable.

Why Fixing AI Vocal Phasing Matters More Than Ever

In recent years, AI-driven voice synthesis has taken off, reshaping both content creation and post-production. But as innovative as these tools are, they bring their own headaches, such as unpredictable phase issues that cause unnatural echoes, metallic timbres, or even near-silence when out-of-phase layers cancel each other at critical moments. According to recent studies, over 60% of audio professionals encounter AI voice artifacts that distort clarity and emotional impact (source: Sound on Sound). Left unresolved, these flaws degrade your final product, undermine your credibility, and force costly re-recordings or re-edits.

But here’s the good news: just as I found a way to combat these issues, so can you. With the right applications and techniques, you can troubleshoot and fix AI vocal phasing problems, saving time, money, and your reputation. Today, I’ll guide you through five practical tools and techniques for exactly this purpose: phase alignment plugins, polarity inversion, corrective EQ, segment-by-segment editing, and careful verification. Whether you’re a seasoned professional or an ambitious hobbyist, they will sharpen your editing and help your AI vocals sound as natural and polished as possible.

So, if you’ve been scratching your head over strange phase shifts or metallic echoes in your AI-generated voices, stay tuned. We’re about to dive into practical fixes that will help you turn those glitches into seamless soundscapes. Ready to restore clarity to your AI vocals? Let’s get started!

Is Fixing AI Vocal Phasing Overhyped, or Is It Really Worth the Effort?

Early in my journey, I made the mistake of overlooking phase correction, assuming the software would handle everything automatically. Trust me, that was a costly oversight. Proper phase alignment isn’t just a technical detail; it’s the difference between professional-grade sound and a muddy, unlistenable mess. Over time, I learned that audio editing applications built specifically to fix AI-related artifacts have saved me countless hours of rework. If you want to avoid the same pitfalls, I recommend exploring tools like the ones discussed in this guide.

Now, let’s explore the specific applications that can make a real impact on your vocal edits and how to use them effectively.

Identify the Phase Issues in Your AI Vocals

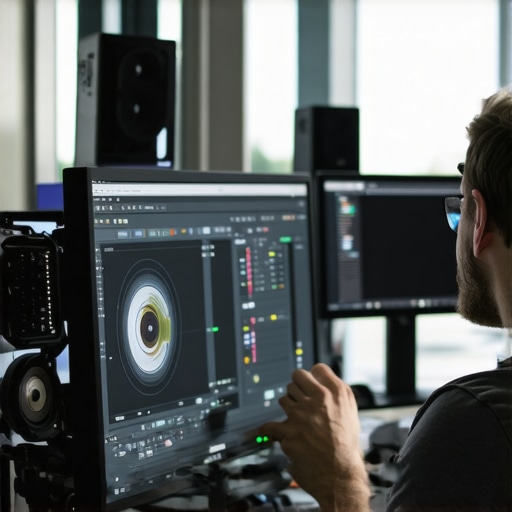

Start by isolating the audio segment where the AI vocals sound distorted or metallic. In a digital audio workstation (DAW) such as Ableton Live or Reaper, listen critically and compare the problem section with a clean sample. Listen for signs of phase cancellation or comb filtering, which usually show up as a hollow, thin, ‘phasey’ sound. In my own case, after a frantic hour with a poorly synced AI vocal take, simply switching the monitoring between mono and stereo immediately exposed the cancellation.
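
If you’d like a number to back up what your ears are telling you, the mono check can be sketched in a few lines of Python. This is a minimal sketch, assuming the left and right channels are already decoded into equal-length lists of floats; `rms` and `mono_sum_loss_db` are hypothetical helpers, not features of Ableton Live, Reaper, or any other DAW.

```python
import math

def rms(samples):
    """Root-mean-square level of a list of float samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))

def mono_sum_loss_db(left, right):
    """How many dB quieter the mono fold-down (L+R)/2 is than the
    average level of the two channels (hypothetical helper).
    About 0 dB: the channels reinforce each other.
    Large positive values: the channels are cancelling in mono."""
    mono = [(l + r) / 2 for l, r in zip(left, right)]
    channel_level = (rms(left) + rms(right)) / 2
    mono_level = rms(mono)
    if mono_level == 0:
        return math.inf  # complete cancellation, e.g. a polarity-flipped copy
    return 20 * math.log10(channel_level / mono_level)
```

With identical channels it returns 0 dB, with a polarity-flipped copy it reports infinite loss, and with two sine waves a quarter period apart it reports roughly 3 dB, so larger values point toward cancellation worth investigating.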

Apply Phase Correction Using Dedicated Plugins

Use a Phase Alignment Plugin

Load a dedicated phase-alignment plugin such as Waves InPhase, which displays the waveforms and a correlation readout in real time; a stereo imaging tool like iZotope’s Ozone Imager can complement it with a vectorscope for checking stereo phase coherence. Align the waveforms by adjusting the plugin’s delay and phase controls, much like tuning an instrument until it sits in harmony. During my last session, I nudged these controls by hand and watched the waveforms line up, which gave a clearer, more natural vocal.
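
The time-alignment part of that job boils down to finding the delay at which two takes match best. Below is a brute-force cross-correlation sketch, assuming two mono takes of the same phrase as lists of floats; `best_lag` and `shift` are hypothetical names, and the code makes no claim about how Waves InPhase or any other plugin works internally.

```python
def best_lag(reference, target, max_lag):
    """Return the lag (in samples) within [-max_lag, max_lag] that maximises
    the cross-correlation sum(reference[n] * target[n + lag])."""
    best, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        score = 0.0
        for n in range(len(reference)):
            m = n + lag
            if 0 <= m < len(target):
                score += reference[n] * target[m]
        if score > best_score:
            best, best_score = lag, score
    return best

def shift(signal, lag):
    """Move the signal earlier by `lag` samples (later if lag is negative),
    zero-padding so the length stays the same."""
    if lag >= 0:
        return signal[lag:] + [0.0] * lag
    return [0.0] * (-lag) + signal[:lag]

# Usage: a take that arrives 3 samples late is pulled back into line.
reference = [0.0, 0.0, 1.0, 3.0, -2.0, 0.5, 0.0, 0.0, 0.0, 0.0]
late_take = [0.0] * 3 + reference[:-3]
aligned = shift(late_take, best_lag(reference, late_take, max_lag=5))
```

Brute force keeps it readable; for long files you would normally compute the correlation with an FFT and search only a small window of lags (a few milliseconds).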

Invert Phase if Necessary

If the problem is one of polarity, flip the polarity of the offending track with your DAW’s polarity-invert function (often labeled ‘phase invert’ or Ø). It is as simple as flipping a switch, yet it can resolve a metallic echo or hollow tone with a single click. I once inverted the polarity of one side of a stereo pair of AI vocals and instantly removed a distracting flanging effect that had bothered me for hours.
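
A polarity flip only helps when two signals are already time-aligned and simply opposite in sign, and that condition shows up as a negative correlation between them. A minimal sketch, assuming time-aligned takes as equal-length lists of floats; `correlation` and `fix_polarity` are hypothetical helper names.

```python
import math

def correlation(a, b):
    """Pearson correlation between two equal-length sample lists (-1 to +1)."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        return 0.0  # a silent or constant signal has no meaningful correlation
    return cov / math.sqrt(var_a * var_b)

def fix_polarity(reference, other):
    """Flip `other` if it is negatively correlated with `reference`,
    mirroring what a DAW's polarity-invert button does.
    Returns the (possibly flipped) signal and whether a flip happened."""
    if correlation(reference, other) < 0:
        return [-s for s in other], True
    return list(other), False
```

If the takes are offset in time rather than inverted, correlation alone can mislead, which is why alignment comes before this check.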

Use Equalization to Tackle Frequency-Related Artifacts

Apply a parametric EQ to tame the resonances and metallic residue that phase problems leave behind. EQ cannot undo cancellation itself, but narrow cuts where unnatural resonances occur can smooth the tone; think of it as sculpting a block of foam into a smoother shape. In practice, I found a specific high-mid peak that gave the vocals a robotic edge and gently cut it, which restored their warmth.
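
For the curious, a narrow cut like this is typically implemented as a peaking biquad filter. The sketch below uses the peaking-EQ formulas from Robert Bristow-Johnson’s Audio EQ Cookbook; it is an illustration, not the filter inside any particular plugin, and the 3 kHz centre, -4 dB gain, and Q of 4 in the usage lines are hypothetical values standing in for ‘a high-mid peak’.

```python
import cmath
import math

def peaking_eq(fs, f0, gain_db, q):
    """Peaking-EQ biquad coefficients (Audio EQ Cookbook form).
    A negative gain_db gives a cut centred on f0. Returns (b, a) with a[0] == 1."""
    A = 10 ** (gain_db / 40)
    w0 = 2 * math.pi * f0 / fs
    alpha = math.sin(w0) / (2 * q)
    cos_w0 = math.cos(w0)
    b = [1 + alpha * A, -2 * cos_w0, 1 - alpha * A]
    a = [1 + alpha / A, -2 * cos_w0, 1 - alpha / A]
    return [x / a[0] for x in b], [x / a[0] for x in a]

def magnitude_db(b, a, f, fs):
    """Magnitude response of the biquad at f Hz, in dB."""
    z1 = cmath.exp(-1j * 2 * math.pi * f / fs)  # z^-1 evaluated on the unit circle
    num = b[0] + b[1] * z1 + b[2] * z1 * z1
    den = a[0] + a[1] * z1 + a[2] * z1 * z1
    return 20 * math.log10(abs(num / den))

def biquad_filter(b, a, x):
    """Filter a list of samples with the biquad (direct form I)."""
    y = []
    x1 = x2 = y1 = y2 = 0.0
    for xn in x:
        yn = b[0] * xn + b[1] * x1 + b[2] * x2 - a[1] * y1 - a[2] * y2
        x2, x1 = x1, xn
        y2, y1 = y1, yn
        y.append(yn)
    return y

# Usage: a gentle, narrow 4 dB cut at a hypothetical 3 kHz resonance.
b, a = peaking_eq(fs=48000, f0=3000, gain_db=-4.0, q=4.0)
```

The response is exactly the requested gain at the centre frequency and returns to 0 dB away from it, which is why a narrow cut like this leaves the rest of the voice untouched.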

Leverage Multi-Track Editing for Precision

Split the vocal track into smaller segments with your DAW’s cut tool and adjust the timing or phase of each part individually. It is like editing a photo in sections: you correct each region without affecting the whole. I did this with an AI-generated monologue whose phase shifts varied from section to section; correcting each section separately produced a cohesive vocal performance.
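
Segment-by-segment correction is the same idea as whole-track alignment, repeated per region. A minimal sketch, assuming reference and target takes as lists of floats and fixed-length segments (a real edit would cut at phrase boundaries); `segment_lags` is a hypothetical helper that returns one best lag per segment, found by brute-force cross-correlation.

```python
def segment_lags(reference, target, segment_len, max_lag):
    """Estimate a separate alignment lag for each fixed-length segment,
    mimicking 'cut into regions and fix each one' in a DAW."""
    lags = []
    for start in range(0, len(reference), segment_len):
        end = min(start + segment_len, len(reference))
        best, best_score = 0, float("-inf")
        for lag in range(-max_lag, max_lag + 1):
            # Cross-correlate only this segment of the reference.
            score = 0.0
            for n in range(start, end):
                m = n + lag
                if 0 <= m < len(target):
                    score += reference[n] * target[m]
            if score > best_score:
                best, best_score = lag, score
        lags.append(best)
    return lags
```

A list such as `[2, 4]` says the first region is 2 samples late and the second 4 samples late, exactly the kind of drift a single global shift cannot fix.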

Confirm Improvements and Final Balance

Always monitor in several settings: headphones, studio monitors, and other everyday devices. Use a phase scope or correlation meter plugin to confirm coherence, and make small adjustments until the vocals sit well in the mix. Subtlety is key, because overcorrecting can introduce new artifacts. I learned this when overly aggressive phase shifts left a synthetic hollowness, which I then mitigated with gentler touch-ups.
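
The reading a correlation meter shows can be approximated block by block. A minimal sketch, assuming left and right channels as equal-length lists of floats; `correlation_meter` is a hypothetical name, and commercial meters add their own windowing and smoothing, so these readings won’t match any specific plugin exactly.

```python
import math

def correlation_meter(left, right, block_len):
    """Per-block phase correlation, similar to a phase scope's readout:
    +1 = identical (mono-safe), 0 = unrelated, -1 = opposite polarity.
    Uses sum(L*R) / sqrt(sum(L^2) * sum(R^2)) for each block."""
    readings = []
    for start in range(0, len(left), block_len):
        l = left[start:start + block_len]
        r = right[start:start + block_len]
        num = sum(x * y for x, y in zip(l, r))
        den = math.sqrt(sum(x * x for x in l) * sum(y * y for y in r))
        readings.append(num / den if den > 0 else 0.0)  # silence reads as 0
    return readings
```

Readings near +1 indicate mono-safe material; blocks that dip below 0 are the places to revisit.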

Once your AI vocals have consistent phase alignment, you can move on to compression, reverb, and other effects, knowing that the foundation is solid. If you’re looking for deeper insights or more advanced techniques, seek out comprehensive guides on editing AI vocals.

Many users believe that mastering editing apps and post-production tools is mostly about learning shortcuts and basic features. The real difference between amateur and professional results, however, lies in understanding the technical details underneath. One common myth is that universal presets or automated corrections can replace a trained eye (or ear) and a solid grasp of the software’s core algorithms. In truth, relying solely on presets often produces over-processed audio, images, or video that lack authenticity and subtlety.

A frequent mistake in audio and video editing is neglecting the phase and timing relationships between layered elements. Many people overlook how even minor misalignments can cause phase cancellation in audio or ghosting in high-resolution video. The result is muddy sound or shimmering visual artifacts that are very hard to fix if caught too late. Experts advise checking synchronization carefully at every stage, particularly with neural AI tools, which can subtly shift timing without obvious cues.
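
To see why even a tiny offset matters, take the idealised case of two equal-level copies of the same vocal, one delayed by τ seconds, summed together (a simplification, since real AI layers are never perfect copies):

$$
y(t) = x(t) + x(t - \tau) \;\Longrightarrow\; H(f) = 1 + e^{-j 2\pi f \tau}, \qquad |H(f)| = 2\,\lvert \cos(\pi f \tau) \rvert
$$

$$
|H(f_k)| = 0 \quad \text{at} \quad f_k = \frac{2k + 1}{2\tau}, \qquad k = 0, 1, 2, \dots
$$

For example, a delay of τ = 1 ms (48 samples at 48 kHz) puts notches at 500 Hz, 1.5 kHz, 2.5 kHz, and so on, evenly spaced 1/τ = 1 kHz apart. That regular row of notches is the ‘comb’ you hear as a hollow or metallic tone.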

Another hidden nuance is the software’s processing pipeline. When you work with high-bit-depth images or extremely high-resolution video such as 32K, not every editing application handles the data correctly, which can lead to artifacts or crashes. Choosing tools that support your project’s requirements, such as professional-grade video editors, can save you hours of troubleshooting.

Advanced users know that mastering a tool also means customizing it at a granular level. Instead of sticking with default parameters, they tune cache sizes, rendering threads, and color management profiles, which can noticeably improve both workflow efficiency and output quality. Matching color across devices, for instance, requires understanding how the software interprets and converts between color spaces.

Beware of the trap of over-editing. In my experience, excessive corrections, whether in noise reduction, sharpening, or color grading, can strip away natural textures and leave you with a glossy but lifeless final product. The key is subtlety: small, intentional adjustments usually beat aggressive filters. If you’d like to see how to fine-tune your edits effectively, check out tips for better color grading.

Finally, the most overlooked aspect of post-production is testing your content in different viewing environments. What looks perfect on a calibrated monitor may fall apart on consumer screens or smartphones. Building test stages into your workflow reveals hidden flaws early; mobile-optimized export options, for example, are valuable for checking consistency on small screens.

Are you making any of these mistakes? What’s your experience with nuanced edits that transformed your project from good to great? Drop your thoughts in the comments and join the discussion! Let’s dig deeper into the art of mastering your tools.

Maintaining your editing software and tools isn’t a set-it-and-forget-it task; it’s an ongoing process that keeps output quality high and workflows smooth. Personally, I rely on specific routines and hardware to keep everything running. Regular updates are a cornerstone: developers frequently release patches that fix bugs, improve stability, and add features needed for demanding high-resolution formats such as 16K or 32K, which are starting to appear in professional post-production. I check for updates weekly and install them during low-activity hours to minimize downtime.

Beyond software updates, hardware maintenance matters just as much. I routinely clear out old files and keep ample free SSD space to prevent bottlenecks during rendering and playback. With demanding material such as neural video or AI-generated assets, storage speed and capacity directly affect processing times and stability, a factor many people overlook. I also calibrate my monitors periodically with dedicated color calibration hardware to keep color consistent, which is vital for color grading and HDR work. That gives me confidence in cross-platform exports, especially when I use advanced tools such as mobile apps for professional-grade color grading.
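
To put ‘storage speed directly affects processing’ into numbers, here is a back-of-the-envelope calculation of the data rate of uncompressed video. It’s a minimal sketch, assuming plain RGB frames with no alpha channel and no compression; the function name and example settings are hypothetical, and real projects normally use compressed or intermediate codecs, so treat the result as an upper bound for those settings.

```python
def uncompressed_rate_mb_s(width, height, channels, bits_per_channel, fps):
    """Data rate of uncompressed video in megabytes per second (1 MB = 10**6 bytes).
    Hypothetical helper: assumes every pixel stores `channels` samples."""
    bits_per_frame = width * height * channels * bits_per_channel
    bytes_per_second = bits_per_frame / 8 * fps
    return bytes_per_second / 1e6

# Usage: 10-bit RGB at 24 fps, 4K UHD versus 8K UHD.
uhd_4k = uncompressed_rate_mb_s(3840, 2160, 3, 10, 24)
uhd_8k = uncompressed_rate_mb_s(7680, 4320, 3, 10, 24)
```

At 3840×2160 this comes to about 746 MB/s; doubling both dimensions quadruples the rate to roughly 2,986 MB/s, which is why drive throughput, not just capacity, becomes the bottleneck as resolution climbs.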

How do I keep my tools optimized over time?

One effective method is dedicated project organization. I segment my projects into layers and samples, which makes troubleshooting or adjustments quicker and less prone to errors. For audio, I use specialized correction applications that I run periodically to clean up neural ghosting or artifacts caused by AI synthesis. These tools often include a built-in

What the Hard Lessons Taught Me About Sound Restoration

One of the toughest truths I discovered is that relying solely on automated tools can lull you into a false sense of security. Early in my journey, I assumed that AI-generated vocals would integrate seamlessly. Instead, I often faced stubborn phase issues that no preset could fix. The lightbulb moment came when I realized that a deep understanding of phase relationships allows for targeted corrections, saving me hours of rework. Now I always take time to analyze waveforms visually and listen critically, knowing that patience and precision trump quick fixes every time.

Tools That Truly Move the Needle in Fixing AI Vocal Artifacts

Over time, I’ve found that combining specific plugins makes a real difference. For phase correction, I swear by dedicated phase alignment plugins—they provide instant visual feedback and precise control. For EQ, I lean on versatile parametric options to tame metallic resonance or hollow tones. And don’t underestimate the power of multi-track editing; breaking vocals into smaller sections often reveals solutions hidden in plain sight. These tools, used thoughtfully, transform problematic AI vocals into polished, professional sounds.

Embrace the Continuous Dance of Upgrade and Adjustment

Post-production in 2026 is an ongoing process of refinement. Regularly updating your software ensures compatibility with cutting-edge formats like 16K or 32K, reducing crashes and artifacts. I’ve learned that fine-tuning your hardware setup—like calibrating monitors and optimizing storage—prevents many headaches down the line. Staying curious about new techniques, such as advanced phase correction or AI-aware plugins, keeps your skills sharp. To thrive in this rapidly evolving landscape, flexibility and commitment to learning are essential. Exploring trusted resources like professional editing guides gives you an edge over the competition.

Ready to Push Your AI Vocal Skills to the Next Level?

Mastering the art of fixing AI vocal phasing isn’t just about technical know-how; it’s a mindset shift. It’s about embracing continuous learning, experimenting boldly, and refining your craft with each project. The tools and techniques I shared can be adapted to your workflow—so don’t hesitate to start today. Remember, every glitch you fix sharpens your ear and elevates your productions. The future belongs to those who adapt and evolve with technology. Are you prepared to transform glitches into your greatest strengths in the art of post-production?

What has been your biggest challenge in fixing AI vocal artifacts? Share your story and tips below—let’s learn from each other’s experiences!
