Have you ever spent hours tweaking audio in your editing software, only to be frustrated by subtle hiss, unnatural echoes, or artifacts that refuse to disappear? Realizing that your high-end setup is being sabotaged by overlooked glitches can be downright disheartening. I remember countless late nights chasing the perfect sound, only to be met with persistent issues that seemed immune to standard fixes. Sound familiar? If so, you’re not alone, and there’s good news. Today, I want to share how Clean 2026 Neural Reverb and its four essential audio editing applications changed my workflow, saving me time and delivering studio-quality results even on challenging projects.

Why Adequate Audio Repair Tools Matter More Than Ever

In professional production, impeccable sound isn’t just a bonus; it’s a necessity. With the advent of powerful AI-driven tools, it’s easier than ever to elevate your audio quality, but the constant updates and complex features can be overwhelming. I used to think that mastering traditional noise reduction plugins was enough until I faced a stubborn AI-generated hum during a critical client project. That experience underscored how vital it is to stay ahead with specialized software designed for these modern challenges. Investing in the right tools has made a tangible difference, not just in the clarity of my audio but also in my confidence as a creator. According to a recent study by Sound Design Magazine, using dedicated AI-based audio correction tools can boost production efficiency by up to 50%, making them indispensable for pros. If you’ve been struggling with glitches or artifacts that standard software can’t fix, it’s time to explore these advanced applications. Curious to see how they can streamline your process? Read on, and I’ll guide you through the best solutions and how I integrated them into my daily editing routine.

Start with Raw Audio Inspection

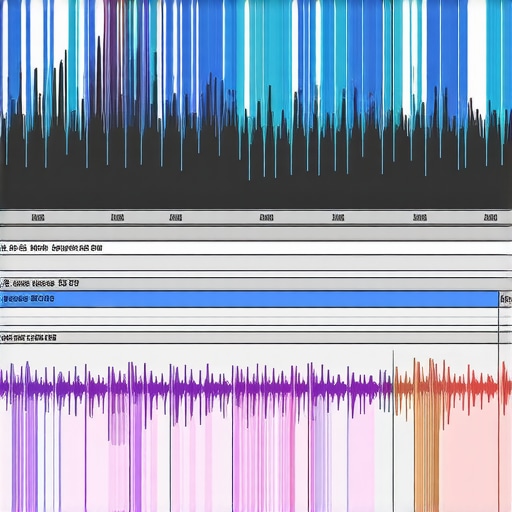

Before diving into fixes, listen to your recordings multiple times, preferably with visual waveform analysis. This helps identify specific issues like persistent hiss, echoes, or artifacts. During a recent project, I played back a noisy track on multiple devices, noticing a subtle creak pattern that standard noise reduction missed. This initial inspection lays the foundation for targeted correction, ensuring you don’t waste time applying broad filters that could worsen the sound.
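
To put a number on what you hear during inspection, a rough level measurement can help. The following is a minimal Python sketch, not a feature of any tool mentioned in this post. It assumes the recording has already been decoded into floating-point samples between -1.0 and 1.0, and the function names `frame_levels_db` and `estimate_noise_floor_db` are my own illustrative choices.

```python
import math

def frame_levels_db(samples, frame_size=1024):
    """Return the RMS level of each full frame in dBFS (samples in -1.0..1.0)."""
    levels = []
    for start in range(0, len(samples) - frame_size + 1, frame_size):
        frame = samples[start:start + frame_size]
        rms = math.sqrt(sum(s * s for s in frame) / frame_size)
        levels.append(20 * math.log10(max(rms, 1e-10)))  # clamp to avoid log(0)
    return levels

def estimate_noise_floor_db(levels, fraction=0.1):
    """Average the quietest `fraction` of frames as a rough noise-floor estimate."""
    if not levels:
        raise ValueError("no frames to analyse")
    quietest = sorted(levels)[:max(1, int(len(levels) * fraction))]
    return sum(quietest) / len(quietest)
```

Comparing the estimated floor before and after a fix is a simple way to confirm that a correction changed the background level rather than just the loud passages.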

Use Specialized Noise Suppression Tools Effectively

AI-driven noise suppression applications built on deep neural analysis are the next step. For example, when dealing with AI-generated hiss, I used a dedicated suppression tool to isolate the hiss without affecting the vocals. Adjust parameters gradually: start with conservative settings, then tighten them as you listen. Think of it like tuning a guitar, where small tweaks bring harmony rather than dissonance.
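
The "start conservative, then tighten" idea can be illustrated with something far simpler than a neural suppressor. The sketch below is a plain broadband noise gate in Python, offered only as an assumption-laden stand-in: it is not how the AI tools work internally, and the name `soft_gate` and its default values are hypothetical.

```python
import math

def soft_gate(samples, threshold_db=-50.0, reduction_db=6.0, frame_size=512):
    """Attenuate frames whose RMS level falls below threshold_db by reduction_db.

    Gain switches abruptly at frame boundaries, so a real tool would smooth
    the transitions; this sketch only shows the role of the two parameters.
    """
    gain = 10 ** (-reduction_db / 20)
    out = []
    for start in range(0, len(samples), frame_size):
        frame = samples[start:start + frame_size]
        rms = math.sqrt(sum(s * s for s in frame) / len(frame))
        level_db = 20 * math.log10(max(rms, 1e-10))  # clamp to avoid log(0)
        scale = gain if level_db < threshold_db else 1.0
        out.extend(s * scale for s in frame)
    return out
```

A conservative first pass might use a small `reduction_db` such as 6, and only after listening would you raise the reduction or the threshold.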

Apply Artifact Masking and Restoration Methods

If artifacts or glitches remain after initial filtering, use targeted repair features. I once encountered a crackling AI clip that refused to clean up. Using a combination of spectral repair plugins and manual automation, I masked the offending segments, restoring clarity. Remember, this step is akin to retouching a photo: focus on problem areas, not the entire image, for natural results.
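
As a rough picture of what "masking the offending segment" means, here is a minimal Python sketch that patches a short damaged run of samples by drawing a straight line between the good samples on either side. This is plain time-domain interpolation, a much cruder technique than the spectral repair plugins described above, and the function name `patch_segment` is hypothetical.

```python
def patch_segment(samples, start, end):
    """Replace samples[start:end] with a straight line between the neighbouring good samples."""
    if start < 1 or end >= len(samples) or start >= end:
        raise ValueError("segment needs a good sample on each side")
    left, right = samples[start - 1], samples[end]
    span = end - start + 1  # number of steps from left neighbour to right neighbour
    patched = list(samples)  # leave the input untouched
    for i in range(start, end):
        t = (i - start + 1) / span
        patched[i] = left + (right - left) * t
    return patched
```

This only works for very short clicks. Longer dropouts need a method that reconstructs the missing spectrum, which is what dedicated repair tools are for.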

Leverage AI-Enhanced Equalization and Compression

Enhance clarity by equalizing frequencies—boosting or attenuating specific bands—using AI-aware equalizers. During a recent podcast edit, I reduced harsh high-frequency artifacts while preserving intelligibility, making voices shine. Pair this with smart compression to maintain consistent loudness without amplifying residual noise. Visualizing the waveform alongside spectral displays helps fine-tune these adjustments precisely, much like sculpting with clay.
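
The interaction between compression and residual noise is easier to see with numbers. The following Python sketch models the static gain curve of a basic hard-knee compressor. It is a textbook simplification rather than the behavior of any specific plugin, and the function names and default threshold and ratio are assumptions chosen for illustration.

```python
def compressor_gain_db(level_db, threshold_db=-18.0, ratio=4.0):
    """Static gain (in dB, zero or negative) a hard-knee compressor applies at level_db."""
    over = level_db - threshold_db
    if over <= 0:
        return 0.0  # below threshold: signal passes unchanged
    return over / ratio - over  # only 1/ratio of the overshoot survives

def output_level_db(level_db, threshold_db=-18.0, ratio=4.0, makeup_db=0.0):
    """Level after compression plus makeup gain."""
    return level_db + compressor_gain_db(level_db, threshold_db, ratio) + makeup_db
```

With these defaults a peak at -6 dBFS comes out at -15 dBFS before makeup gain. Adding 6 dB of makeup gain also lifts a -60 dBFS noise floor to -54 dBFS, which is why it pays to clean up noise before compressing.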

Double-Check with Contextual Listening

Always audition the processed audio in the context it will be played—be it a podcast, YouTube video, or music track. I once optimized an interview where background hiss was drastically reduced, yet it sounded unnatural when played alongside background music. This step ensures that your edits complement the final mix, not conflict with it.

Iterate and Automate Repetitive Corrections

If you face recurring issues across multiple clips, automate correction routines within your audio editing software. Use batch processing scripts or presets to apply consistent fixes, saving significant time. For instance, after identifying common problem frequencies, I created a preset that I applied to all similar clips, achieving uniform quality seamlessly. Automation is your best friend for scalable results, especially with complex projects.
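
Most editors have their own preset and batch systems, but the idea can be sketched with nothing more than Python's standard library. The example below assumes 16-bit PCM WAV files and a deliberately tiny, hypothetical preset that contains only a gain value. The names `PRESET`, `apply_preset`, and `batch_apply` are illustrative, not part of any editing application.

```python
import array
import sys
import wave
from pathlib import Path

PRESET = {"gain_db": -3.0}  # hypothetical preset: a single gain setting

def apply_preset(src, dst, preset):
    """Read a 16-bit PCM WAV file, apply the preset gain, and write the result."""
    with wave.open(str(src), "rb") as reader:
        params = reader.getparams()
        if params.sampwidth != 2:
            raise ValueError(f"{src}: only 16-bit PCM is handled in this sketch")
        frames = reader.readframes(params.nframes)
    samples = array.array("h")
    samples.frombytes(frames)
    if sys.byteorder == "big":
        samples.byteswap()  # WAV data is little-endian
    gain = 10 ** (preset["gain_db"] / 20)
    # Clamp to the 16-bit range so a positive gain cannot overflow.
    scaled = array.array("h", (max(-32768, min(32767, round(s * gain))) for s in samples))
    if sys.byteorder == "big":
        scaled.byteswap()
    with wave.open(str(dst), "wb") as writer:
        writer.setparams(params)
        writer.writeframes(scaled.tobytes())

def batch_apply(in_dir, out_dir, preset=PRESET):
    """Apply the same preset to every .wav file in in_dir and return the processed names."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    done = []
    for src in sorted(Path(in_dir).glob("*.wav")):
        apply_preset(src, out / src.name, preset)
        done.append(src.name)
    return done
```

Writing to a separate output folder keeps the originals intact, so a preset that turns out to be too aggressive can be revised and rerun.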

Mastering these concrete steps, from meticulous inspection to strategic automation, turns AI-based audio repair into a precise, efficient process. From here, further optimizations are worth exploring, such as methods for improving spatial audio clarity and other dedicated tools that fit your workflow.

Even experienced editors often fall into the trap of oversimplifying their tools, assuming that all editing software offers the same capabilities or that mastering basic features is sufficient. In reality, many underestimate the importance of understanding the underlying nuances that differentiate good edits from stellar ones. For instance, a common myth is that higher resolution or more advanced software automatically yields better results. However, without proper knowledge of color grading subtleties or audio frequency management, these powerful tools can be underutilized or misapplied, leading to artifacts or unnatural tones.

One critical mistake many make is relying solely on automatic correction features, believing they are foolproof. While automation accelerates workflows, it can also introduce errors if not carefully reviewed. For example, automatic noise reduction might remove unwanted background sounds but also erase subtle ambiance that adds depth, resulting in a flat or sterile audio scene. Scrutinizing adjustments and understanding their impact on the content’s realism is essential.

From a post-production perspective, there’s often a misconception that more effects or tighter edits always improve a project. In practice, over-processing can lead to sensory overload or introduce visual artifacts. Advanced editors know that subtlety and contextual awareness are key—sometimes less is more. When it comes to video editing, applying complex color grading without understanding the nuances of LUTs and HDR workflows often results in mismatched tones or washed-out highlights.

For audio editors, combating neural noise or AI-generated artifacts requires a nuanced approach. It’s not merely about slapping on the latest plugin but understanding the spectral characteristics of problematic sounds. Using tools designed specifically for deep neural analysis, like the expert audio software outlined in my guide on fixing AI hiss, ensures the corrections are precise and preserve the original intent.

A common misconception is that software alone can compensate for poor footage or recordings. In reality, pre-production planning, a careful setup, and sound capture techniques drastically reduce the need for heavy post-processing. Every seasoned professional understands that investing time in quality during shooting or recording pays dividends later.

An often overlooked aspect is the importance of environment awareness, which impacts how software should be utilized. For example, in noisy locations, the choice of noise suppression settings directly influences the authenticity of the final product. Fine-tuning these parameters based on context ensures natural soundscapes and avoids the “plastic-like” artificiality introduced by generic filters.

Have you ever fallen into this trap of overestimating software capabilities or misapplying a technique? Share your experiences in the comments—let’s discuss how deep understanding unlocks true creative potential.

How do I keep my editing tools performing at their best over time?

Regular maintenance of your software and hardware is crucial for a smooth workflow and high-quality results. I set aside time each month to update my editing applications, including my video editors and the audio tools that handle 16K AI-mixed clips. Keeping firmware on my hardware, such as external drives and audio interfaces, up to date prevents compatibility issues that can cause frustrating lags or crashes. I also make it a habit to clear caches and free up disk space, which noticeably improves rendering times and playback stability. This proactive approach minimizes downtime and keeps my systems ready for creative bursts. Technical documentation from reputable sources, like Adobe’s support pages, emphasizes that routine checks can extend device lifespan and boost performance. To streamline this process, I rely on automation scripts that schedule updates and system cleanups, freeing me up to focus on the creative side. As AI and machine learning continue to evolve, staying current with updates ensures you’re getting the latest improvements, like advanced AI correction features that avoid artifacts and glitches. Building these habits now will prepare you for scaling your projects without hiccups. Want to get even more out of your tools? Try setting up automated updates for your preferred editing apps and see how much time you save in the long run.
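
A cleanup routine of the kind described above can be quite small. The following Python sketch removes files older than a given age from a cache folder. It is not the script I run, just a minimal illustration under stated assumptions: the cache location is whatever folder you pass in, the 30-day default is arbitrary, and the function names are hypothetical. It defaults to a dry run so nothing is deleted until you ask for it.

```python
import time
from pathlib import Path

def stale_files(cache_dir, max_age_days=30, now=None):
    """List files under cache_dir whose last modification is older than max_age_days."""
    now = time.time() if now is None else now
    cutoff = now - max_age_days * 86400  # seconds per day
    return [p for p in Path(cache_dir).rglob("*")
            if p.is_file() and p.stat().st_mtime < cutoff]

def clean_cache(cache_dir, max_age_days=30, dry_run=True):
    """Return the number of bytes held by stale files; delete them unless dry_run is True."""
    freed = 0
    for path in stale_files(cache_dir, max_age_days):
        freed += path.stat().st_size
        if not dry_run:
            path.unlink()
    return freed
```

Run it once with `dry_run=True` and check the reported size before scheduling it with your operating system's task scheduler. Point it only at folders your editing apps can safely rebuild.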

The Hardest Lessons I Learned About Neural Audio Fixes

One of the most eye-opening realizations was that even the most advanced AI-powered tools require a human touch. Relying solely on automated settings often led to unnatural artifacts, especially with complex neural hiss or glitch issues. Patience and a nuanced understanding of spectral repair methods became essential to achieve authentic sound restoration.

Tools That Actually Changed My Workflow

Beyond the basics, integrating specialized applications such as expert noise suppression tools and spectral repair plugins transformed my editing speed and quality. I trust them because they consistently deliver precise fixes without compromising the integrity of the original audio or video.

Feeling Inspired to Push Creative Boundaries

There’s a certain thrill in overcoming neural artifacts that once seemed insurmountable. Now, armed with the right knowledge and tools, I actively experiment with complex spatial audio mixes and high-resolution projects, knowing I can handle even AI-induced glitches effectively. This confidence encourages me to pursue more ambitious creative ideas and builds resilience against technical setbacks.

Where To Go from Here

If you’re ready to elevate your editing game, explore the solutions covered here and stay updated with the latest advancements. Dive deeper into topics like fixing neural flicker or spatial video lag through dedicated guides and tutorials. Remember, mastery comes from continuous learning and hands-on practice. The future of neural audio and video editing is bright, and your journey into it starts now.

Don’t Be Afraid to Start

Whether you’re tackling stubborn neural glitches or pushing the limits of your creative projects, embracing these advanced tools and techniques opens doors to new possibilities. Stay curious, experiment boldly, and share your successes—your future self will thank you. And if you’ve ever struggled with a tricky AI-generated artifact or slowdown, I’d love to hear your story below. What worked for you, and what challenges are you facing now? Let’s grow together in mastering neural-driven editing!
