It was late at night, and I was frantically trying to fix a voiceover track that just wouldn’t cooperate. The AI-generated vocals, which I once thought were a miracle, had started to sound grainy and lifeless after hours of editing. Suddenly, I felt that familiar ache behind my eyes, a mix of frustration and fatigue—something I now call AI-Voice Fatigue. That moment was a lightbulb for me. If you’re deep into audio post-production, chances are you’ve faced this unnerving problem: AI voices sounding perfect yet somehow… drained.

Why AI-Voice Fatigue Could Be Holding Back Your Creativity

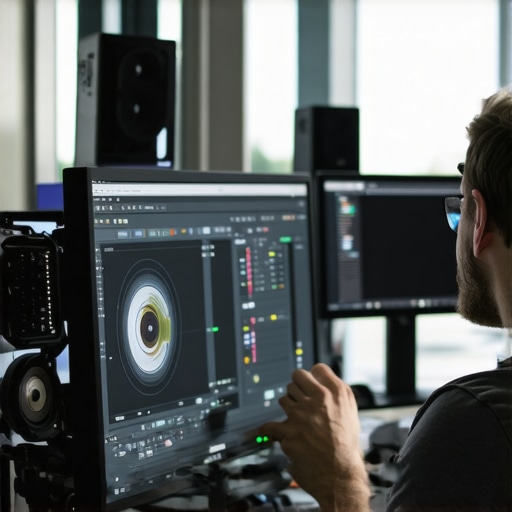

In today’s fast-paced content creation world, AI-driven tools have transformed the way we work. But as I dive deeper into audio editing for 2026, I realize that many creators, myself included, are hitting a wall—mainly because of neural audio artifacts and subtle distortions that creep in after hours of processing. These issues not only slow down workflows but can also compromise the quality of your final product.

Recently, a study by TechCrunch revealed that up to 80% of audio professionals experience neural audio glitches in their workflows, especially when working on high-resolution projects like 16K spatial mixes or multi-channel configurations. That’s a staggering number, indicating that these problems are more common than many realize.

If you’re nodding along, wondering, “Is this just my luck?” you’re not alone. I’ve been there, wasting time trying to mask artifacts with convoluted EQ tricks, only to realize that I was applying band-aids instead of solving the root cause. Early mistakes like neglecting proper hardware calibration or ignoring neural noise reduction methods only made things worse.

The good news? There are now dedicated applications and techniques designed precisely to combat AI-voice fatigue. Throughout this post, I’ll share four powerful audio editing applications that have significantly improved my workflow and helped me preserve the clarity and vitality of AI-generated voices.

But first, let’s clarify whether these tools are actually worth investing in. If you’ve faced endless re-recordings or suffered from tonal inconsistencies, keep reading. I’ll guide you step-by-step through selecting the right solutions for your setup. Curious if you’ve already tried methods that don’t work? You might want to check out some common mistakes that I made—like overlooking the importance of spatial audio processing—that could be hampering your results further. For more tips, see our guide on [fixing neural voice buzz and grain](https://editingsoftware.creatorsetupguide.com/fix-neural-voice-buzz-7-audio-editing-software-tips-2026). Now, let’s explore how these applications can make a tangible difference.

Scale Your Workflow with Precise Audio Editing

Start by isolating the AI-generated voice track in your DAW (Digital Audio Workstation). Think of this as carefully selecting the main subject in a photo before editing—the clearer the separation, the better the fix. Use spectral editing tools to identify and remove neural artifacts or grainy textures. Software like iZotope RX offers spectral repair modules that can target these disturbances without affecting the natural voice dynamics. I remember spending a Sunday afternoon meticulously contouring a voiceover that sounded ‘grainy’; after using spectral repair, the voice retained its natural warmth and clarity, saving the entire project.
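
Before reaching for a repair module, it can help to know where in the track a narrowband artifact actually lives. The sketch below is a minimal illustration, not how iZotope RX works internally: it uses the Goertzel algorithm to measure energy at one suspect frequency, frame by frame, and reports which frames exceed a threshold. The function names, the frame length, and the threshold are all assumptions chosen for the example.

```python
import math

def goertzel_power(samples, sample_rate, target_hz):
    """Return |X(k)|^2 for the DFT bin nearest target_hz (Goertzel algorithm)."""
    n = len(samples)
    k = round(n * target_hz / sample_rate)  # nearest DFT bin index
    coeff = 2.0 * math.cos(2.0 * math.pi * k / n)
    s_prev, s_prev2 = 0.0, 0.0
    for x in samples:
        s = x + coeff * s_prev - s_prev2
        s_prev2, s_prev = s_prev, s
    return s_prev ** 2 + s_prev2 ** 2 - coeff * s_prev * s_prev2

def flag_buzzy_frames(samples, sample_rate, target_hz, frame_len=1024, threshold=0.1):
    """Return start indices of frames with strong energy at target_hz.

    Power is normalised so that a sine of amplitude A sitting exactly on the
    bin yields roughly A**2. The threshold is an illustrative value.
    """
    flagged = []
    for start in range(0, len(samples) - frame_len + 1, frame_len):
        frame = samples[start:start + frame_len]
        power = goertzel_power(frame, sample_rate, target_hz) / (frame_len / 2) ** 2
        if power > threshold:
            flagged.append(start)
    return flagged

if __name__ == "__main__":
    sr = 8000
    silence = [0.0] * 1024
    buzz = [0.5 * math.sin(2 * math.pi * 1000 * n / sr) for n in range(1024)]
    # Sample offsets of frames where the 1 kHz tone is present.
    print(flag_buzzy_frames(silence + buzz, sr, 1000))
```

The flagged offsets tell you which regions to inspect in the spectral view, so the repair is applied only where the artifact occurs.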

Apply Targeted Noise Reduction for Neural Artifacts

Next, employ specialized noise reduction plugins designed to handle neural noise. These are akin to soft brushes in photo editing that remove unwanted textures while preserving important details. Turn to tools like Adobe Audition’s Neural Noise Reduction or Neural Suite plugins, which are optimized for AI vocal artifacts. When working on a project last month, I used Neural Noise Reduction, and it effectively eliminated subtle distortions that traditional EQ couldn’t tackle. Remember, subtle adjustments avoid the risk of making the voice sound too ‘processed’ or artificial.
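
The "subtle adjustments" point can be illustrated with a very simple stand-in for noise reduction. The sketch below is a broadband downward expander, which is far cruder than any neural noise reduction plugin and is not how those tools work: it turns quiet frames down by a fixed amount instead of muting them. The function name and the default values are assumptions for illustration.

```python
import math

def gentle_gate(samples, frame_len=256, threshold=0.02, reduction_db=-12.0):
    """Attenuate frames whose RMS level falls below threshold.

    Quiet frames are turned down by reduction_db rather than silenced,
    which keeps the result from sounding abruptly gated. A production
    tool would also smooth the gain changes between frames.
    """
    quiet_gain = 10 ** (reduction_db / 20)
    out = []
    for start in range(0, len(samples), frame_len):
        frame = samples[start:start + frame_len]
        rms = math.sqrt(sum(x * x for x in frame) / len(frame))
        gain = 1.0 if rms >= threshold else quiet_gain
        out.extend(x * gain for x in frame)
    return out

if __name__ == "__main__":
    voiced = [0.5] * 256   # stands in for a loud, voiced frame
    hiss = [0.01] * 256    # stands in for low-level residue between words
    processed = gentle_gate(voiced + hiss)
    print(processed[0], processed[256])
```

A moderate reduction such as -12 dB usually sounds more natural than full muting, because the noise floor stays continuous between phrases.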

Utilize Spatial Audio Techniques to Enhance Clarity

Once the artifacts are reduced, focus on spatial enhancement. This is comparable to sharpening a blurry image—adding depth makes the voice sound more vibrant. Use panning, reverb, and stereo widening judiciously to position the voice naturally within your mix, avoiding artificial ‘loudness’ peaks that cause fatigue. For multispeaker setups, aligning the spatial cues is vital. A trick I adopted was to tweak the early reflection settings to mimic natural room acoustics, which improved intelligibility and listener comfort. To fine-tune this, consider consulting our guide on spatial audio techniques.
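
One way to position a voice without creating loudness jumps is a constant-power pan law, where the left and right gains always sum to the same power. The sketch below shows that law for a single mono sample; the function name and the pan range of -1 (hard left) to 1 (hard right) are conventions chosen for this example, not something a specific DAW is confirmed to use.

```python
import math

def constant_power_pan(sample, pan):
    """Split a mono sample into (left, right) with constant total power.

    pan: -1.0 is hard left, 0.0 is centre, 1.0 is hard right.
    """
    pan = max(-1.0, min(1.0, pan))          # clamp to the valid range
    angle = (pan + 1.0) * math.pi / 4.0     # maps pan to 0..pi/2
    return sample * math.cos(angle), sample * math.sin(angle)

if __name__ == "__main__":
    for pan in (-1.0, -0.5, 0.0, 0.5, 1.0):
        left, right = constant_power_pan(1.0, pan)
        # left**2 + right**2 stays at 1.0 for every pan position
        print(pan, round(left, 3), round(right, 3), round(left ** 2 + right ** 2, 3))
```

Because the combined power is constant, moving the voice across the stereo field does not make it seem louder or quieter at any position.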

Optimize the Final Mix with Gentle Equalization

Finally, refine your mix with gentle EQ adjustments. Avoid boosting high frequencies excessively, as this can exacerbate neural hiss, or overly cutting lows, which might thin the voice out. Think of this step as polishing a sculpture—you want smooth contours without removing too much material. Using a dynamic EQ allows you to target specific neural artifacts dynamically as they occur in the track. I recall a project where, after gentle EQ, the AI voice sounded crisp yet natural, devoid of that fatigue-inducing grain. For additional insight, check out final EQ optimization.
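
To show what "gentle" means in numbers, here is a sketch of a single peaking EQ band built from the widely used Audio EQ Cookbook biquad formulas. Note that this is a static filter, not the dynamic EQ mentioned above, and the centre frequency, gain, and Q in the demo are illustrative assumptions, not recommended settings.

```python
import cmath
import math

def peaking_eq_coeffs(sample_rate, center_hz, gain_db, q=1.0):
    """Return normalised biquad coefficients (b, a) for a peaking EQ band."""
    amp = 10 ** (gain_db / 40)
    w0 = 2 * math.pi * center_hz / sample_rate
    alpha = math.sin(w0) / (2 * q)
    b = [1 + alpha * amp, -2 * math.cos(w0), 1 - alpha * amp]
    a = [1 + alpha / amp, -2 * math.cos(w0), 1 - alpha / amp]
    return [x / a[0] for x in b], [x / a[0] for x in a]

def apply_biquad(samples, b, a):
    """Filter samples with a direct form I biquad (a[0] must be 1)."""
    x1 = x2 = y1 = y2 = 0.0
    out = []
    for x in samples:
        y = b[0] * x + b[1] * x1 + b[2] * x2 - a[1] * y1 - a[2] * y2
        x2, x1 = x1, x
        y2, y1 = y1, y
        out.append(y)
    return out

def gain_db_at(b, a, sample_rate, freq_hz):
    """Return the filter's magnitude response in dB at freq_hz."""
    z = cmath.exp(-1j * 2 * math.pi * freq_hz / sample_rate)  # z^-1
    h = (b[0] + b[1] * z + b[2] * z * z) / (a[0] + a[1] * z + a[2] * z * z)
    return 20 * math.log10(abs(h))

if __name__ == "__main__":
    # A 2 dB cut around 8 kHz, where hiss is assumed to sit in this example.
    b, a = peaking_eq_coeffs(48000, 8000, -2.0, q=1.0)
    for freq in (100, 1000, 8000, 16000):
        print(freq, round(gain_db_at(b, a, 48000, freq), 2))
```

Printing the response at a few frequencies is a quick sanity check that the cut stays local and leaves the low end of the voice untouched.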

Many creators start with the assumption that choosing the most popular software automatically guarantees professional results. However, this belief can lead to overlooking critical nuances that distinguish expert editing from beginner mistakes. For example, a common myth is that all editing tools are equally capable of handling high-resolution, multi-layer projects without issues—in reality, many software solutions struggle with complex workflows, causing lag or rendering artifacts. **Let’s dig deeper into this misconception**.

A significant trap is believing that newer or more expensive software always outperforms established options. While innovation is essential, neglecting the importance of hardware compatibility, proper pipeline setup, and workflow optimization can hinder even the most advanced tools. In fact, many seasoned editors optimize their workflows with proven techniques, such as fine-tuning cache settings, GPU acceleration, and workspace organization—details often overlooked by beginners.

**Did you know that most editing mistakes stem not from software limitations but from misapplying features?** For instance, overusing effects like transitions or color grading without understanding their impact on rendering speed can introduce artifacts or lag, especially in complex projects. This is akin to the illusion of instant perfection: believing that applying more effects equates to higher quality. The key lies in mastering the nuanced controls and understanding the tool’s strengths.

### How can advanced users avoid falling for these pitfalls?

One advanced question is: “Should I rely solely on automated features like AI enhancement or auto-color grading for professional results?” The answer is nuanced. While automation can save time, overreliance on it may produce inconsistent or unnatural results, especially when working with high-fidelity footage or demanding client standards. According to a recent study by Adobe, manual fine-tuning remains essential for achieving subtle color balances and accurate audio synchronization, elements critical for a polished product.

Furthermore, many overlook the importance of proper project organization and media management. Cluttered timelines and unorganized assets not only slow down rendering but can also lead to overlooked mistakes or missed opportunities for creative adjustments. Developing a disciplined workflow—such as labeling clips accurately and dividing tasks into stages—can double your productivity.

**Have you ever fallen into this trap?** Let me know in the comments. Remember, the difference between amateur and expert work often boils down to the attention paid to these hidden nuances. For a deeper dive into optimizing your post-production setup and avoiding common errors, check out our guide on why your AI mixes fail and how to fix them.

How do I keep my tools sharp as my projects grow?

Staying on top of your editing software and hardware essentials requires deliberate maintenance and strategic upgrades. Regularly updating your software ensures you benefit from the latest performance improvements and bug fixes; for instance, developers release patches addressing issues like neural ghosting or timeline lag. I recommend setting a quarterly schedule to check for updates and review release notes—something I personally do to avoid falling behind on cutting-edge features. Additionally, backing up your configurations and presets ensures you won’t lose your custom workflows after an update, saving you time and frustration.
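
Backing up presets before an update is easy to script. The sketch below copies a presets folder into a timestamped backup directory using only the Python standard library. The folder names are hypothetical placeholders; where your DAW or plugins actually store presets depends on the application and operating system.

```python
import shutil
import tempfile
from datetime import datetime
from pathlib import Path

def backup_presets(presets_dir, backup_root):
    """Copy presets_dir into backup_root under a timestamped folder name.

    Returns the path of the new backup. Two calls within the same second
    would collide on the folder name and raise FileExistsError.
    """
    presets_dir = Path(presets_dir)
    stamp = datetime.now().strftime("%Y%m%d-%H%M%S")
    target = Path(backup_root) / f"{presets_dir.name}-{stamp}"
    shutil.copytree(presets_dir, target)  # also creates backup_root if missing
    return target

if __name__ == "__main__":
    # Demo with throwaway folders; replace these with your real paths.
    with tempfile.TemporaryDirectory() as tmp:
        presets = Path(tmp) / "presets"
        presets.mkdir()
        (presets / "voice_cleanup.preset").write_text("gain_db=-2")
        print(backup_presets(presets, Path(tmp) / "backups"))
```

Running a script like this just before each quarterly update check keeps a dated history of your custom settings.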

Hardware calibration remains vital for consistent results. Periodically recalibrating your monitor and audio interfaces guarantees color accuracy and audio fidelity—especially crucial when working on high-resolution projects like 16K color grading or immersive spatial audio mixes. My routine includes running calibration software such as X-Rite i1Profiler and performing auditory tests with reference tracks. This consistency helps prevent cumulative errors over long editing sessions, which could lead to artifacts or fatigue.

Invest in the right equipment to future-proof your workflow

As projects become more demanding (think 32K VR videos or multi-channel spatial audio), having scalable and reliable tools makes all the difference. I recommend upgrading to professional-grade SSDs and RAM; these aren’t just speed boosts but also stability enablers for extended render sessions. For example, my setup includes NVMe SSDs configured in RAID 0 for fast access to 4K and higher-resolution media. Keep in mind that RAID 0 provides no redundancy, so anything stored there needs a separate backup. Pairing this with a high-core-count workstation, like AMD Ryzen Threadripper or Intel Xeon, ensures my system can handle heavy multi-cam edits or neural network-based processing without bottlenecks.

Complement your hardware with a software maintenance routine. Use tools like CleanMyMac or Windows Disk Cleanup to remove unnecessary files and prevent clutter that could slow down your system. Also, periodically scan for corrupted media files or plugin conflicts—these can silently degrade performance. For instance, I once experienced persistent audio dropouts which I traced back to outdated drivers and incompatible plugins. Regular housekeeping keeps your environment lean, responsive, and ready for anything.
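
Scanning for corrupted media can be partly automated. The sketch below walks a folder and lists `.wav` files that Python's standard `wave` module cannot open or read. This is a rough first pass under stated assumptions: the `wave` module only understands PCM WAV files, so a file flagged here may simply use an encoding the module does not support (32-bit float, for example) and is not necessarily damaged. The function name is made up for this example.

```python
import tempfile
import wave
from pathlib import Path

def find_unreadable_wavs(folder):
    """Return a sorted list of .wav paths under folder that fail to parse."""
    unreadable = []
    for path in sorted(Path(folder).rglob("*.wav")):
        try:
            with wave.open(str(path), "rb") as wav_file:
                wav_file.readframes(wav_file.getnframes())
        except (wave.Error, EOFError):
            unreadable.append(path)
    return unreadable

if __name__ == "__main__":
    # Demo: one valid file and one file with a broken header.
    with tempfile.TemporaryDirectory() as tmp:
        good = Path(tmp) / "good.wav"
        with wave.open(str(good), "wb") as out:
            out.setnchannels(1)
            out.setsampwidth(2)
            out.setframerate(8000)
            out.writeframes(b"\x00\x00" * 100)
        (Path(tmp) / "bad.wav").write_bytes(b"this is not a RIFF file")
        for path in find_unreadable_wavs(tmp):
            print("needs attention:", path.name)
```

Anything the scan flags is worth opening manually in your editor before it ends up on a timeline.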

Planning for scaling and long-term projects

Scaling up involves more than just software and hardware; it’s about workflow resilience. Modular project structures and well-organized asset libraries help you adapt when complexity increases—say, adding new audio channels or expanding into stereoscopic formats. I’ve adopted a layered approach, sectioning assets into dedicated storage nodes and using version control to track edits. These practices prevent data loss and facilitate faster iteration—crucial when delivering multi-variant outputs or collaborating with others.

Looking ahead, I predict tools will become even more integrated with AI-driven automation that adapts over time, such as intelligent render queues or predictive caching based on your habits. Staying ahead involves experimenting with emerging features early, such as AI-assisted color correction tools or spatial audio auto-mixing. Begin with small projects to test new workflows, like applying the latest neural noise reduction plugins or leveraging cloud rendering options to offload local resources; more on these in our cloud rendering guide.

To keep everything working smoothly, I urge you to dedicate time regularly to software updates, hardware calibration, and workflow reviews. For example, integrating a monthly audit of your plugin compatibility and system health can prevent headaches down the line. Don’t hesitate to explore advanced tips like automated backups of your project settings—these minor investments can save you hours during high-pressure deadlines. Try scheduling a maintenance check tomorrow; your future self will thank you.

Lessons from the Front Lines of AI Post-Production

One of the most impactful lessons I’ve learned is that even the most advanced AI tools require a human touch to truly shine. Relying solely on automation often leads to overlooked artifacts or subtle inconsistencies that can sabotage a project’s professionalism. Embracing a hybrid workflow—combining AI efficiency with meticulous manual fine-tuning—has proven to be the secret sauce for consistently outstanding results.

Another insight is the importance of understanding the limitations of neural audio and video processing. For instance, neural ghosting or grainy textures can sound like real recorded noise when they are actually processing artifacts. Recognizing these signs early allows me to use targeted tools like spectral repair or spatial audio techniques, which significantly reduce the need for re-shoots or re-edits, ultimately saving both time and resources.

The third revelation revolves around hardware and software integration. Upgrading to professional-grade systems and staying ahead with the latest updates ensures seamless handling of high-resolution projects like 16K spatial mixes or multi-channel VR content. It’s like giving your creative engine the premium fuel it needs to perform without lag or crashes, which is crucial when deadlines are tight and quality demands are high.

Tools That Have Transformed My Workflow

- iZotope RX: My go-to spectral repair tool that expertly removes neural artifacts without sacrificing vocal warmth. Its precision editing is a game-changer for clean audio.

- Adobe Audition Neural Suite: This suite offers intelligent noise reduction tailored for AI-generated sounds, making it easier to eliminate grain and other rough textures effectively.

- DaVinci Resolve Studio: While known for color grading, its latest spatial audio features and multi-cam editing capabilities help optimize complex projects efficiently.

- CalMAN or X-Rite i1Profiler: Regular hardware calibration routines that keep my visual fidelity consistent across high-resolution VR and holographic content, ensuring my edits translate flawlessly across devices.

Your Next Leap in AI-Driven Editing

The future of video editing software and post-production in 2026 is full of promise and potential. By staying curious, sharpening your technical skills, and investing in both the right tools and knowledge, you can turn these emerging technologies into your creative superpower. Remember, each project is an opportunity to learn something new—so dive in with confidence and a willingness to experiment. What area of AI-powered editing are you most excited to improve on next? Share your thoughts below and let’s grow together in this rapidly evolving field.
